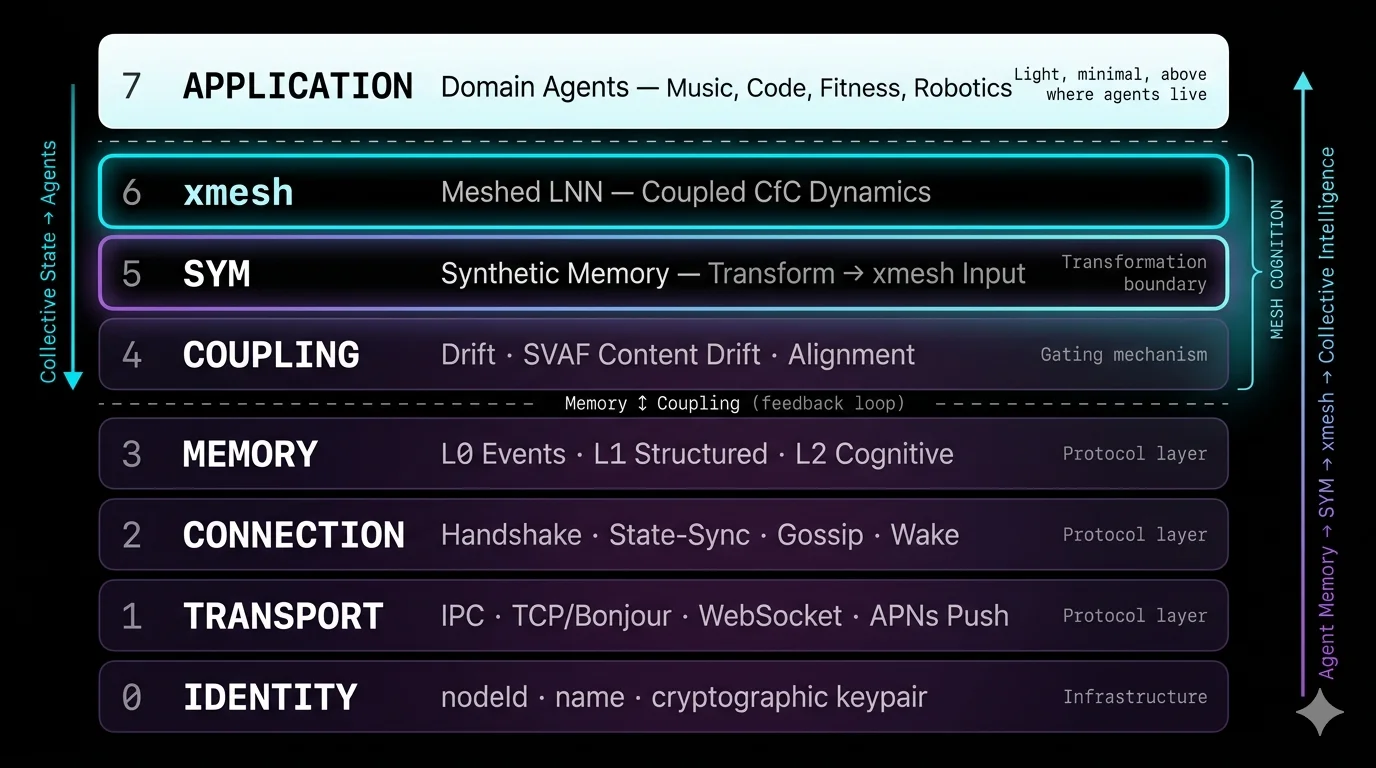

2. Architecture Overview

MMP is an 8-layer protocol stack. Each layer has a defined responsibility. Implementations MUST implement Layers 0–3 to participate in the mesh. Implementations SHOULD implement Layers 4–7 (Mesh Cognition) for full cognitive participation; relay-only nodes MAY omit them.

2.1 Layer Stack

Layer 7. Where agents live and their LLMs reason on the remix subgraph. Mesh Cognition happens here.

Layer 6. Each agent runs its own Liquid Neural Network (LNN). Fast neurons track mood; slow neurons preserve domain expertise. Hidden state (h₁, h₂) is exchanged via state-sync.

Layer 5. The bridge between reasoning (LLM) and dynamics (LNN). Encodes derived knowledge into CfC-compatible hidden state vectors.

Layer 4. The gate. SVAF evaluates each of the 7 CMB fields independently. The consent primitive enables withdrawal. Nothing enters cognition without passing this layer.

Layer 3. Three memory tiers with graduated disclosure. L0 stays local. L1 is gated by SVAF. L2 is exchanged via state-sync.

Layer 2. Peer lifecycle: discover, connect, handshake, heartbeat, gossip peer metadata, wake sleeping nodes.

Layer 1. Length-prefixed JSON over TCP (LAN), WebSocket (relay), or IPC (local). Zero-configuration discovery via DNS-SD.

Layer 0. Persistent UUID per node. It never changes. This is the foundation everything else builds on.
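The length-prefixed JSON framing used by the transport can be sketched as follows. This is a minimal illustration, not the normative wire format: the 4-byte big-endian length prefix and the `{"type": "heartbeat"}` message shape are assumptions, since this section does not state the prefix width or the message schema.

```python
import json
import struct

# Assumption: a 4-byte big-endian unsigned length prefix.
# The prefix width is not specified in this section.
_HEADER = struct.Struct(">I")


def encode_frame(message: dict) -> bytes:
    """Serialise one message as <length><utf-8 JSON payload>."""
    payload = json.dumps(message, separators=(",", ":")).encode("utf-8")
    return _HEADER.pack(len(payload)) + payload


def decode_frames(buffer: bytes) -> tuple[list[dict], bytes]:
    """Return every complete frame in buffer plus the unconsumed remainder."""
    messages = []
    offset = 0
    while len(buffer) - offset >= _HEADER.size:
        (length,) = _HEADER.unpack_from(buffer, offset)
        end = offset + _HEADER.size + length
        if end > len(buffer):
            break  # partial frame: wait for more bytes from the stream
        body = buffer[offset + _HEADER.size:end]
        messages.append(json.loads(body.decode("utf-8")))
        offset = end
    return messages, buffer[offset:]


# Hypothetical message shape, for illustration only.
frame = encode_frame({"type": "heartbeat"})
messages, remainder = decode_frames(frame + frame[:3])
```

Returning the remainder lets a caller keep appending bytes from a TCP stream and re-run the decoder, which is the usual reason for length-prefixing over a stream transport.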

2.2 Design Principles

No servers

There is no mesh without agents. Agents are the mesh. No central server, no orchestrator, no master node. Every participant is a peer.

Cognitive autonomy

Each agent evaluates, reasons, and acts independently. The mesh influences but never overrides. Coupling is a suggestion, not a command.

Memory is remixed, not shared

Agents don’t copy each other’s memory. They remix it — process it through their own domain intelligence and produce something new. The original is immutable. The remix is a new CMB with lineage.
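A remix that leaves its parent untouched can be sketched as below. The `CMB` class, its attribute names, and the `create` and `remix` helpers are hypothetical names chosen for illustration; only the ideas of immutability, parents, and ancestors come from this document.

```python
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: attributes cannot be reassigned after creation
class CMB:
    id: str
    author: str
    fields: tuple          # sorted (name, value) pairs, kept as an immutable tuple
    parents: tuple = ()    # ids of the CMBs this one was remixed from
    ancestors: tuple = ()  # ids of every CMB further up the lineage


def create(author: str, fields: dict) -> CMB:
    """Create an original CMB with no lineage."""
    return CMB(str(uuid.uuid4()), author, tuple(sorted(fields.items())))


def remix(parent: CMB, author: str, fields: dict) -> CMB:
    """Produce a new CMB; the parent is read but never modified."""
    return CMB(
        id=str(uuid.uuid4()),
        author=author,
        fields=tuple(sorted(fields.items())),
        parents=(parent.id,),
        ancestors=parent.ancestors + (parent.id,),
    )


# Hypothetical field values, for illustration only.
original = create("MeloTune", {"mood": "calm"})
derived = remix(original, "MeloMove", {"mood": "calm", "focus": "stretching"})
```

Because lineage lives only on the new CMB, every existing copy of `original` on other nodes stays valid without any update.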

Per-field evaluation

A signal is not accepted or rejected as a whole. SVAF evaluates each of the 7 semantic fields independently. A signal with relevant mood but irrelevant focus is partially accepted, not ambiguously scored.
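Per-field evaluation can be illustrated with a small sketch. It assumes each field already carries a relevance score in [0, 1] (how SVAF derives that score from drift is not described in this section), multiplies it by the agent's α_f weight, and maps the result to accept, guard, or reject. The thresholds and the function name are hypothetical, and only the two field names mentioned in the text are used.

```python
from enum import Enum


class Verdict(Enum):
    ACCEPT = "accept"
    GUARD = "guard"
    REJECT = "reject"


# Hypothetical thresholds; the real values are not given in this section.
ACCEPT_AT = 0.5
GUARD_AT = 0.2


def evaluate_fields(relevance: dict[str, float],
                    alpha: dict[str, float]) -> dict[str, Verdict]:
    """Return one verdict per field instead of a single score for the signal."""
    verdicts = {}
    for name, score in relevance.items():
        weighted = alpha.get(name, 0.0) * score  # unknown fields get weight 0
        if weighted >= ACCEPT_AT:
            verdicts[name] = Verdict.ACCEPT
        elif weighted >= GUARD_AT:
            verdicts[name] = Verdict.GUARD
        else:
            verdicts[name] = Verdict.REJECT
    return verdicts


# A signal with relevant mood but irrelevant focus.
result = evaluate_fields(
    relevance={"mood": 0.9, "focus": 0.1},
    alpha={"mood": 1.0, "focus": 1.0},
)
```

Here `result` accepts `mood` and rejects `focus`, which is the partial acceptance described above.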

LLM reasons, LNN evolves

Two cognitive components per agent. The LLM (Layer 7) traces lineage ancestors and reasons on the remix subgraph — generating understanding. The LNN (Layer 6) evolves continuous-time state from that understanding. Neither alone is sufficient.

The graph is the intelligence

Intelligence is not in any single agent or model. It is in the growing DAG of remixed CMBs connected by lineage. Each cycle, the graph grows. Each agent understands more than it did before.

2.3 What Makes MMP Different

| Dimension | Message Bus | Shared Memory | Federated Learning | MMP |
|---|---|---|---|---|
| What flows | Messages | Shared state | Gradients | Remixed CMBs + hidden state |
| Evaluation | Topic routing | None (all shared) | Aggregation | Per-field SVAF (7 dimensions) |
| Intelligence | None | Central model | Better model | LLM reasons on remix graph |
| Coupling time | Request-response | Real-time (shared) | Offline (training) | Inference-paced (continuous) |
| Coordination | Central broker | Central store | Central aggregator | Peer-to-peer (no centre) |
| Memory | Fire and forget | Mutable shared | Model weights | Immutable CMBs with lineage |
| New agent joins | Subscribe to topics | Access shared store | Join training round | Define α_f weights, connect |

2.4 Node Model

Every participant is a node. There is no architectural distinction between a “server” and a “client.” A physical node is a device-level mesh presence with persistent identity (daemon, app process). A virtual node is an application-level agent connected via local IPC — ephemeral, comes and goes without disrupting the mesh.

MacBook (physical node: sym-daemon)
├── Claude Code (virtual, ephemeral)
├── MeloTune Mac (virtual, ephemeral)
└── Any MCP client (virtual, ephemeral)
iPhone - MeloTune (physical node: app process)
iPhone - MeloMove (physical node: app process)
Cloud (physical node: relay process)
└── Telegram bot (virtual, co-hosted)
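The physical/virtual distinction can be modelled with two small types. This is an in-memory sketch under stated assumptions: the class names, the `attach` and `detach` methods, and generating the UUID at construction time are all hypothetical, and a real physical node would persist its identifier across restarts.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class VirtualNode:
    """Application-level agent attached over local IPC; ephemeral."""
    name: str


@dataclass
class PhysicalNode:
    """Device-level mesh presence with a stable identity."""
    name: str
    # Assumption: generated once here; a real node would load it from storage.
    node_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    virtual: dict[str, VirtualNode] = field(default_factory=dict)

    def attach(self, agent: VirtualNode) -> None:
        self.virtual[agent.name] = agent

    def detach(self, name: str) -> None:
        # Removing a virtual node leaves the physical identity untouched.
        self.virtual.pop(name, None)


macbook = PhysicalNode("sym-daemon")
identity_before = macbook.node_id
macbook.attach(VirtualNode("Claude Code"))
macbook.attach(VirtualNode("MeloTune Mac"))
macbook.detach("Claude Code")
```

After these calls `macbook.node_id` still equals `identity_before`: virtual nodes come and go while the mesh keeps seeing the same physical node.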

2.5 The Mesh Cognition Loop

Mesh Cognition is a closed loop connecting all layers. Each cycle, the remix graph grows and every agent understands more than it did before:

1. SVAF evaluates the inbound CMB per field (Layer 4): per-field drift, α_f weights, accept / guard / reject.

2. Accepted → remixed CMB with lineage (Layer 3): new immutable CMB, parents + ancestors.

3. LLM traces ancestors and reasons on the remix subgraph (Layer 7): what happened, why, what it means for my domain.

4. Synthetic Memory encodes derived knowledge (Layer 5): LLM output → CfC hidden state (h₁, h₂).

5. LNN evolves cognitive state (Layer 6): fast τ (mood) synchronise, slow τ (domain) stay sovereign.

6. State is blended with peers: per-neuron, τ-modulated, inference-paced.

7. Agent acts → new CMB with lineage.ancestors: the response is informed by derived knowledge, not just the agent's own observation.

8. Broadcast to mesh → other agents remix it: the graph grows, the next cycle starts, and each agent learns.
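The per-neuron, τ-modulated blending of state with peers can be sketched as follows. The update rule is an assumption made for illustration: each neuron moves toward the mean of its peers by a factor `min(1, dt / τ)`, so fast neurons (small τ) synchronise and slow neurons (large τ) barely move. The function name, the `dt` parameter, and the rule itself are not taken from this document.

```python
def blend_with_peers(own: list[float],
                     peers: list[list[float]],
                     tau: list[float],
                     dt: float = 1.0) -> list[float]:
    """Blend each neuron toward the peer mean, scaled by its time constant."""
    if not peers:
        return list(own)  # nothing to couple with
    blended = []
    for i, (h, t) in enumerate(zip(own, tau)):
        peer_mean = sum(p[i] for p in peers) / len(peers)
        k = min(1.0, dt / t)  # assumed coupling factor: strong for small tau
        blended.append(h + k * (peer_mean - h))
    return blended


# Neuron 0 is fast (tau = 1), neuron 1 is slow (tau = 100).
state = blend_with_peers(
    own=[0.0, 0.0],
    peers=[[1.0, 1.0]],
    tau=[1.0, 100.0],
)
```

In this example the fast neuron adopts the peer value entirely while the slow neuron shifts by about one percent, which mirrors the "fast τ synchronise, slow τ stay sovereign" behaviour of the loop.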

2.6 Key Architectural Decisions

Why no pub/sub topics?

The coupling engine evaluates relevance per field autonomously. Topics would second-guess autonomous coupling. Adding a new agent type requires no topic configuration — just α_f weights.

Why no consensus protocol?

There is no "correct" global state — only convergent local states. Each node is self-producing (autopoietic). Consensus is unnecessary and would introduce coordination overhead.

Why immutable CMBs?

CMBs are broadcast across nodes — multiple copies exist. If remix required mutating the original, every copy would need updating. Immutability means no distributed state problem. Lineage is computed from the graph, not stored on parents.
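Computing lineage from the graph rather than storing it on parents can be sketched with a plain mapping from CMB id to parent ids. The mapping layout and both function names are hypothetical; the point is that children only ever reference their parents, and anything else is derived by traversal.

```python
def ancestors(cmb_id: str, parents_of: dict[str, tuple]) -> set[str]:
    """Collect every ancestor by walking child-to-parent links."""
    seen = set()
    stack = list(parents_of.get(cmb_id, ()))
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # shared ancestors in the DAG are visited once
        seen.add(current)
        stack.extend(parents_of.get(current, ()))
    return seen


def children(cmb_id: str, parents_of: dict[str, tuple]) -> set[str]:
    """Derive children by scanning the graph; parents store nothing."""
    return {child for child, ps in parents_of.items() if cmb_id in ps}


# Hypothetical remix graph: d remixes b and c, which both remix a.
graph = {"a": (), "b": ("a",), "c": ("a",), "d": ("b", "c")}
lineage_of_d = ancestors("d", graph)
remixes_of_a = children("a", graph)
```

Adding a new remix only inserts one new entry in the mapping, so no existing entry (and no remote copy of it) ever needs to change.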

Why per-agent LNNs, not a central model?

The mesh IS the agents. A central model creates a single point of failure, requires all data to flow to one place, and cannot reason through each agent’s domain lens. Per-agent LNNs preserve autonomy and scale linearly.

Why does the LLM reason, not the LNN?

The LNN processes temporal patterns but cannot reason about WHY a chain of remixes happened. The LLM can. Ancestors provide the endpoints. The LLM provides the reasoning. The LNN provides the dynamics. Both are needed.

Learn more: Mesh Cognition (theoretical foundation, Kuramoto synchronisation, emergent properties); MMP Research (philosophical foundations, node model, design rationale).