Protocol Specification

Mesh Memory Protocol

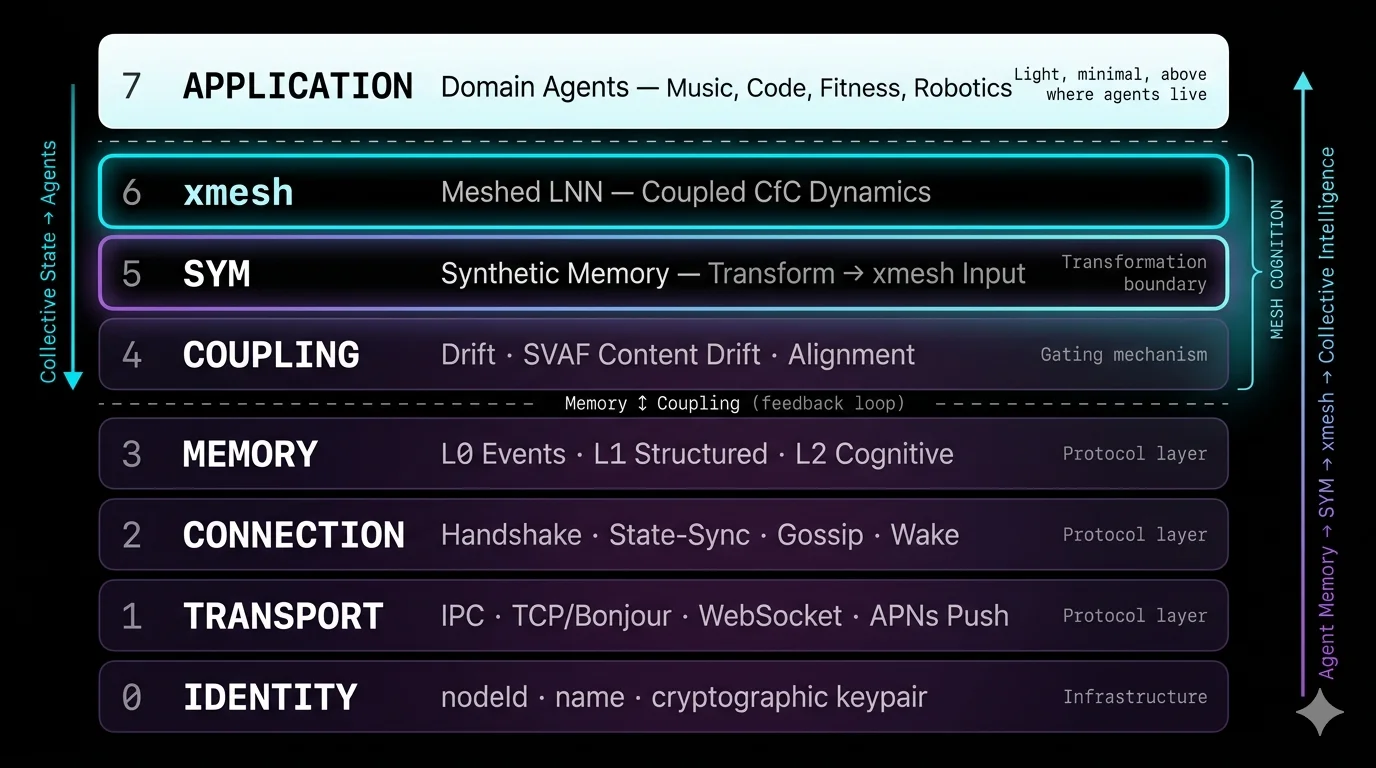

The Protocol for Collective Intelligence

Abstract

The Mesh Memory Protocol (MMP) enables collective intelligence between autonomous AI agents. The mesh has no servers — only nodes. MMP defines how agents identify, connect, store memory, and exchange cognitive state — across eight layers from Identity to Application. Within MMP, SYM (Synthetic Memory, Layer 5) transforms any agent’s memory into neural input. xMesh (Layer 6) is the meshed Liquid Neural Network where collective intelligence emerges from coupled CfC dynamics. Together with the Coupling layer, they form Mesh Cognition (Layers 4–6). Memory is not shared or copied — it is synthesized. Each agent creates new memory from mesh signals through its own domain lens.

Architecture

Layer 7, Application: domain-specific behaviour (music, code, fitness, robotics)

Layer 6, xMesh: meshed LNN; coupled CfC dynamics produce collective intelligence

Layer 5, SYM: Synthetic Memory; transforms any agent memory into xMesh input

Layer 4, Coupling: drift evaluation · SVAF content-level drift · alignment decisions

Layer 3: L0 events · L1 structured (synthesized) · L2 cognitive state

Layer 2: handshake · state-sync · peer gossip · wake

Layer 1: IPC (local) · TCP (LAN) · WebSocket (internet) · Push (wake)

Layer 0, Identity: nodeId · name · [future: cryptographic keypair]

Upward flow: Agent memory → SYM transforms → xMesh coupled CfC dynamics → collective intelligence.

Downward flow: Collective state flows back to agents as synthesized context. The cognition layer gates what memory enters the mesh.

Node Model

The mesh has no servers — only nodes.

Every participant is a node. A relay is a node. A phone is a node. A speaker is a node. A Claude Code session is a node. There is no architectural distinction between a “server” and a “client.”

Physical and Virtual Nodes

A physical node is a device-level mesh presence — persistent identity, maintains transport connections, stores state. It is the device’s presence on the mesh.

A virtual node is an application-level agent — ephemeral, connects to a physical node via local IPC. It comes and goes without disrupting the mesh.

- MacBook (physical node: sym-daemon)
  - Claude Code (virtual, ephemeral)
  - MeloTune Mac (virtual, ephemeral)
  - Any MCP client (virtual, ephemeral)
- iPhone: MeloTune (physical node: app process)
  - SymMeshService (virtual, internal)
  - Music pipeline (virtual, internal)
- iPhone: MeloMove (physical node: app process)
  - SymMeshService (virtual, internal)
  - Recommendation engine (virtual, internal)
- Cloud (physical node: relay process)
  - Telegram bot (virtual, co-hosted)
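The physical/virtual split can be sketched as a small data model. This is a minimal illustration, not the reference implementation: the class names `PhysicalNode` and `VirtualNode`, the `attach` and `detach` methods, and the `node-1` identifier are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class VirtualNode:
    """Application-level agent: ephemeral, attached over local IPC."""
    name: str


@dataclass
class PhysicalNode:
    """Device-level mesh presence: owns the persistent identity."""
    node_id: str
    name: str
    virtual_nodes: Dict[str, VirtualNode] = field(default_factory=dict)

    def attach(self, agent: VirtualNode) -> None:
        # A virtual node borrows the physical node's mesh presence.
        self.virtual_nodes[agent.name] = agent

    def detach(self, agent_name: str) -> None:
        # Detaching leaves node_id untouched, so the mesh identity persists.
        self.virtual_nodes.pop(agent_name, None)


# Hypothetical identifiers, for illustration only.
macbook = PhysicalNode(node_id="node-1", name="MacBook")
macbook.attach(VirtualNode("Claude Code"))
macbook.attach(VirtualNode("MeloTune Mac"))
macbook.detach("Claude Code")  # the session ends; the daemon stays on the mesh
```

The point of the sketch is that agents come and go through `attach` and `detach` while the physical node's identity never changes.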

On macOS/Linux: The physical node is a daemon (launchd / systemd) that runs independently of any application. Apps connect via local IPC.

On iOS: The app IS the physical node. iOS does not permit background daemons. The app maximises persistence through layered background modes (audio, BLE, Network Extension, silent push).

Ideally, MMP would be an OS-level service — like TCP/IP. Until platforms adopt it, apps must simulate this.

Philosophical Foundation

MMP’s layer ordering follows the structure of cognition itself. Cognition emerges from memory. Memory is the substrate; cognition is the process that arises from it.

Aristotle — Metaphysics

Actuality (ἐνέργεια) presupposes potentiality (δύναμις). You cannot actualise what has not been stored.

Friston — Free Energy Principle

Without stored generative models, there is no baseline for prediction, and therefore no cognition. Cognition is the minimisation of surprise relative to a generative model.

Shannon — Information Theory

You cannot compress what has not been stored. Memory is the signal; cognition is the compression.

Maturana — Autopoiesis

Cognition is the history of structural coupling. Without accumulated coupling history (memory), there is no cognition.

Bidirectionality

Buddhist dependent origination shows the relationship is cyclical. The Yogācāra school’s ālaya-vijñāna both stores experiential seeds AND generates conscious moments. MMP models this as a feedback loop: memory feeds cognition, cognition governs memory synthesis, synthesized memories update stores, which update cognitive state.

Three Design Principles

Withdrawal

Object-Oriented Ontology (Harman)

An agent can never be fully known by its peers. Partial knowledge is an ontological condition. The coupling engine selects which aspects to surface.

Resonance over Decision

Taoism — Wu Wei

The coupling engine detects natural affinities rather than computing rigid rules. The protocol creates conditions for the right information to flow through resonance, not prescription.

Extended Cognition

Clark & Chalmers

When synthesized memories are reliably available and automatically endorsed, the mesh becomes a genuinely extended cognitive system.

Memory Layers

Not all memory should leave the agent. MMP defines three layers — a gradient of privacy following OOO’s withdrawal principle. Memory is never copied. It is synthesized.

| Layer | Name | Shared with mesh | Description |
|---|---|---|---|
| L0 | Events | No | Raw events, sensor data, interaction traces. Ephemeral. Local only. |
| L1 | Structured | Via synthesis | Content + tags + source. SYM transforms L1 into xMesh input. |
| L2 | Cognitive | Via state-sync | CfC hidden state vectors. Input to xMesh coupled dynamics. |
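One way to make the three-layer disclosure gradient concrete is a lookup from layer to the path by which it may reach the mesh. This is only a sketch: `MemoryLayer`, `DISCLOSURE`, and `may_leave_agent` are hypothetical names, not part of any published API.

```python
from enum import Enum
from typing import Dict, Optional


class MemoryLayer(Enum):
    L0_EVENTS = 0      # raw events, sensor data, interaction traces
    L1_STRUCTURED = 1  # content + tags + source
    L2_COGNITIVE = 2   # CfC hidden state vectors


# How each layer reaches the mesh; None means it never leaves the agent.
DISCLOSURE: Dict[MemoryLayer, Optional[str]] = {
    MemoryLayer.L0_EVENTS: None,
    MemoryLayer.L1_STRUCTURED: "synthesis",
    MemoryLayer.L2_COGNITIVE: "state-sync",
}


def may_leave_agent(layer: MemoryLayer) -> bool:
    """Return True if the layer has any path onto the mesh."""
    return DISCLOSURE[layer] is not None
```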

Peer Gossip

MMP uses SWIM-style gossip for peer metadata propagation. When two nodes handshake, they exchange what they know about other peers — wake channels, capabilities, last seen timestamps.

This solves the wake bootstrap problem: a node that has never been online at the same time as a sleeping peer can still learn its wake channel through gossip from an always-on node (like the relay).

1. MeloTune → relay: handshake (includes wake channel)
2. MeloTune disconnects (iOS suspended)
3. Claude Code → relay: handshake
4. Relay gossips MeloTune's wake channel to Claude Code
5. Claude Code wakes MeloTune via APNs
6. MeloTune reconnects, receives mood signal

The relay gossips because it’s a peer — not because it’s special. Any always-on node serves the same role. This is emergent, not designed.
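The metadata exchange at handshake time can be sketched as a merge of two peer tables that keeps the freshest entry per node. The `PeerInfo` fields, the `merge_gossip` function, and the `apns:<token>` placeholder are assumptions made for illustration; the actual wire format and merge rule are not specified here.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class PeerInfo:
    node_id: str
    wake_channel: Optional[str]  # hypothetical encoding; None if unknown
    last_seen: float             # seconds since epoch


def merge_gossip(known: Dict[str, PeerInfo],
                 received: Dict[str, PeerInfo]) -> Dict[str, PeerInfo]:
    """Merge a peer's gossip into our table, keeping the freshest entry."""
    merged = dict(known)
    for node_id, info in received.items():
        current = merged.get(node_id)
        if current is None or info.last_seen > current.last_seen:
            merged[node_id] = info
    return merged


# The relay met MeloTune earlier; Claude Code has never seen it.
relay_table = {"melotune": PeerInfo("melotune", "apns:<token>", 100.0)}
claude_table: Dict[str, PeerInfo] = {}

# On handshake, the relay gossips what it knows.
claude_table = merge_gossip(claude_table, relay_table)
```

After the merge, Claude Code holds MeloTune's wake channel even though the two were never online at the same time, which is the bootstrap case described above.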

Cognitive Mechanics

SYM Encoding: Memory → xMesh Input

SYM transforms any agent’s memory into a CfC-compatible hidden state pair. The agent does not need its own neural network — SYM is the universal adapter. The output feeds into xMesh’s coupled CfC dynamics where collective intelligence emerges.

Drift: Cognitive Distance

Kuramoto phase coherence:

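$$\delta(n_i, n_j) = 1 - \frac{1}{d}\left|\sum_{k=1}^{d} \exp\!\left(i\left(\phi_k^{(i)} - \phi_k^{(j)}\right)\right)\right|, \qquad \delta \in [0, 1]$$

Here $\phi_k^{(i)}$ is the $k$-th phase component of node $n_i$ and $d$ is the number of components. δ = 0: identical cognitive states. δ = 1: maximally divergent.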
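As a minimal sketch, the drift formula can be computed directly from two phase vectors. The function name `drift` is hypothetical, and the sketch assumes the phases are already available in radians; how they are derived from CfC hidden state is not covered here.

```python
import cmath
import math
from typing import Sequence


def drift(phases_i: Sequence[float], phases_j: Sequence[float]) -> float:
    """Kuramoto-style drift: 1 minus the coherence of the phase differences."""
    if len(phases_i) != len(phases_j) or not phases_i:
        raise ValueError("phase vectors must be non-empty and of equal length")
    d = len(phases_i)
    # Sum one unit phasor per component, each rotated by the phase difference.
    total = sum(cmath.exp(1j * (a - b)) for a, b in zip(phases_i, phases_j))
    return 1.0 - abs(total) / d


same = drift([0.1, 1.2, 2.3], [0.1, 1.2, 2.3])  # every difference is 0
split = drift([0.0, 0.0], [0.0, math.pi])       # differences 0 and pi cancel
```

Identical phase vectors give a drift of 0, while phase differences that cancel each other out give a drift of 1 (up to floating-point rounding).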

Coupling Decision

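$$\kappa(n_i, n_j) = \begin{cases} \text{aligned} & \text{if } \delta < 0.3 \\ \text{guarded} & \text{if } 0.3 \le \delta < 0.5 \\ \text{rejected} & \text{otherwise} \end{cases}$$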

Asymmetric, dynamic, autonomous. The coupling engine is the router — no pub/sub topics needed.
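The thresholds above translate into a short decision function. This is a sketch: the name `coupling_decision` and the constant names are hypothetical, and only the 0.3 and 0.5 cut-offs come from the specification.

```python
ALIGNED_THRESHOLD = 0.3  # below this, the peer is aligned
GUARDED_THRESHOLD = 0.5  # below this (and at least 0.3), the peer is guarded


def coupling_decision(delta: float) -> str:
    """Map a drift value to a coupling decision."""
    if delta < ALIGNED_THRESHOLD:
        return "aligned"
    if delta < GUARDED_THRESHOLD:
        return "guarded"
    return "rejected"
```

Both comparisons are strict, so a drift of exactly 0.3 is guarded and a drift of exactly 0.5 is rejected.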

SVAF: Content-Level Drift

Peer-level coupling evaluates “is this agent cognitively aligned?” — but cognitively distant agents can still send relevant signals. SVAF evaluates each memory’s content independently:

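Content-level drift (receiver-side):

$$h_{\text{content}} = E(\text{memory\_content})$$

$$\delta_{\text{content}} = 1 - \cos(h_{\text{content}}, h_{\text{local}})$$

$$\text{accept if } \delta_{\text{content}} \le \theta_u, \quad \text{reject otherwise}$$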
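The receiver-side check can be sketched with plain cosine distance. The encoder E is not reproduced here, so the sketch takes already-encoded vectors as input; `cosine_drift`, `svaf_accept`, and the threshold value used in the example are hypothetical.

```python
import math
from typing import Sequence


def cosine_drift(h_content: Sequence[float], h_local: Sequence[float]) -> float:
    """Content-level drift: 1 minus the cosine similarity of two vectors."""
    if len(h_content) != len(h_local):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(h_content, h_local))
    norm = (math.sqrt(sum(a * a for a in h_content))
            * math.sqrt(sum(b * b for b in h_local)))
    if norm == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return 1.0 - dot / norm


def svaf_accept(h_content: Sequence[float], h_local: Sequence[float],
                threshold: float) -> bool:
    """Accept the memory if its content drift is within the threshold."""
    return cosine_drift(h_content, h_local) <= threshold


# Illustrative vectors and threshold only; real inputs come from the encoder.
accepted = svaf_accept([1.0, 1.0], [1.0, 0.0], threshold=0.6)
```

Because cosine similarity ranges over [-1, 1], this drift ranges over [0, 2], so a content drift above 1 simply means the two vectors point in broadly opposite directions.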

Production example:

Claude Code → sym_remember("user sedentary 2hrs, stressed")

→ daemon mesh → MeloMove

→ peer drift: 1.05 (coding ≠ fitness — rejected at peer level)

→ content drift: 0.57 ("sedentary" relevant to fitness — accepted)

→ MeloMove synthesizes recovery workout recommendation

The Full Flow

Agent memory is transformed by SYM into hidden state. MMP transports it to peers. The coupling engine (δ, κ) gates what enters xMesh. xMesh’s coupled CfC dynamics produce collective intelligence. The collective state flows back to each agent as synthesized context. Together, these steps form a continuous dynamical system.

Frame Types

| Frame | Coupling-gated | Purpose |
|---|---|---|
| handshake | No | Identity exchange |
| state-sync | No | Cognitive state exchange (L2) |
| peer-info | No | Gossip peer metadata (wake channels, capabilities) |
| memory-share | SVAF | Share an L1 memory entry (receiver evaluates content-level drift) |
| mood | Evaluated | Emotional/energy state (cross-domain) |
| message | No | Direct communication |
| wake | Autonomous | Wake a sleeping node (out-of-band push) |
| ping / pong | No | Liveness check |
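A receiver could encode the gating column of this table as a lookup keyed by frame type. The sketch below is an assumption about how such a dispatch might look: `Gate`, `FRAME_GATES`, and `gate_for` are hypothetical names, and the frame type strings are taken from the table rather than from a published wire format.

```python
from enum import Enum


class Gate(Enum):
    NONE = "no"                # delivered without coupling evaluation
    SVAF = "svaf"              # receiver evaluates content-level drift
    EVALUATED = "evaluated"    # evaluated by the receiver
    AUTONOMOUS = "autonomous"  # sender decides when to wake a peer


FRAME_GATES = {
    "handshake": Gate.NONE,
    "state-sync": Gate.NONE,
    "peer-info": Gate.NONE,
    "memory-share": Gate.SVAF,
    "mood": Gate.EVALUATED,
    "message": Gate.NONE,
    "wake": Gate.AUTONOMOUS,
    "ping": Gate.NONE,
    "pong": Gate.NONE,
}


def gate_for(frame_type: str) -> Gate:
    """Look up how a frame type is gated; unknown types are an error."""
    try:
        return FRAME_GATES[frame_type]
    except KeyError:
        raise ValueError(f"unknown frame type: {frame_type!r}") from None
```

Keeping the gating rule in one table means a new frame type cannot be handled until its gating has been decided explicitly.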

Design Decisions

Why every daemon is a relay peer

If relaying is “infrastructure,” it needs special treatment. If every daemon can relay, everything comes for free through standard protocol behaviour — handshake, gossip, state retention. Relay peers are “dumb” in that they don’t evaluate coupling, but they participate fully in connection lifecycle and gossip. No single point of failure.

Why physical/virtual separation

Apps restart, crash, update. The mesh shouldn’t break because Claude Code started a new session. The physical node owns the mesh presence; virtual nodes borrow it. This follows the OpenClaw pattern: the Gateway daemon persists independently of any agent session.

Why no pub/sub topics

The coupling engine is the router. Adding topics would second-guess autonomous coupling.

Why no consensus protocol

There is no “correct” global state — only convergent local states. Each node is self-producing (autopoietic).

Why memory layers

L0 stays private. L1 is gated by coupling. L2 is always exchanged. Each layer is a boundary of disclosure — following OOO’s withdrawal.

Implementation

@sym-bot/sym — reference implementation (npm)

sym-swift — iOS/macOS implementation (Swift Package Manager)

mesh-cognition — coupling engine — Mesh Cognition (npm, PyPI)

References

Mesh Cognition Whitepaper — CfC neural networks, Kuramoto coherence, coupling theory

Hasani, R. et al. (2022). Closed-form continuous-time neural networks. Nature Machine Intelligence, 4, 992–1003.

Friston, K. (2010). The free-energy principle. Nature Reviews Neuroscience, 11, 127–138.

Mindverse (2025). AI-native Memory 2.0: Second Me. arXiv:2503.08102.

Aristotle. Metaphysics. · Harman. Object-Oriented Ontology. · Clark & Chalmers (1998). The Extended Mind.

Maturana & Varela (1980). Autopoiesis and Cognition. · Laozi. Tao Te Ching.

Intellectual Property

The Mesh Memory Protocol is original work by Hongwei Xu and SYM.BOT Ltd. The following remain proprietary: trained CfC models and training procedures, SYM transformation mechanisms, xMesh coupled dynamics, domain-specific product integrations, and production configurations.

Academic citation of this work is permitted and encouraged.

For partnership inquiries: info@sym.bot

Mesh Memory Protocol, MMP, SYM, Synthetic Memory, Mesh Cognition, xMesh, MeloTune, and MeloMove are trademarks of SYM.BOT Ltd. © 2026 SYM.BOT Ltd. All Rights Reserved.